Category: Medicine

Could gambling save science?

U.S. economist Robin Hanson posed this question in the title of an article published in 1995. In it he suggested replacing the classic review process with a market-based alternative. Instead of peer review, bets could decide which projects are supported or which scientific questions are prioritized. In these so-called “prediction markets”, individuals stake bets on a particular result or outcome. The more people trade on the market, the more precise the prediction becomes, because it is based on the aggregate information of all participants. The prediction market thus harnesses swarm intelligence. We know this from sports betting and election forecasts. But in science? It sounds totally crazy, but it isn’t. Prediction markets are just now making their entry into various branches of science. How do they work, and what do they have going for them?
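The claim that more participants yield a more precise prediction can be illustrated with a toy simulation. This is only a sketch under strong assumptions that are not part of Hanson's proposal: every participant holds an independent, noisy belief about the true probability of an outcome, and the "market price" is simply the average belief. Real markets aggregate through trading, not averaging. The function name `crowd_error` and all parameter values are hypothetical.

```python
import random
import statistics


def crowd_error(n_participants, true_prob=0.5, noise_sd=0.1, trials=200, seed=1):
    """Mean absolute error of the averaged belief across simulated markets.

    Assumption: beliefs are independent draws around the true probability.
    """
    rng = random.Random(seed)
    errors = []
    for _ in range(trials):
        # Each participant's private belief, clipped to a valid probability.
        beliefs = [
            min(1.0, max(0.0, rng.gauss(true_prob, noise_sd)))
            for _ in range(n_participants)
        ]
        consensus = statistics.fmean(beliefs)  # stand-in for the market price
        errors.append(abs(consensus - true_prob))
    return statistics.fmean(errors)


if __name__ == "__main__":
    for n in (5, 50, 500):
        print(f"{n:4d} participants: mean error {crowd_error(n):.4f}")
```

Under these assumptions the error shrinks roughly with the square root of the number of participants. If beliefs are correlated, for example because everyone has read the same papers, the gain from adding participants is smaller.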

Your Lab is Closer to the Bedside Than You Think

A half-page article about him and his study brought a previously unknown Israeli radiologist into the New York Times (NYT 2009). Dr. Yehonatan Turner presented computed tomography (CT) scans to radiologists and asked them to make a diagnosis. The catch: along with the CT, the physicians were shown a current portrait photograph of the patient. Remember, radiologists very often do not see their patients; they make their diagnoses in a dark room, staring at a screen. In his study, Dr. Turner used a smart cross-over design. He first showed the CT together with a portrait photograph of the patient to one group of radiologists. Three months later the same group had to make a diagnosis using the same CT, but without the photo. Another group of radiologists was first given only the CT and then, three months later, the CT with the photo. A further control group examined only the CTs, as in routine practice. The hypothesis: when a radiologist is exposed to the individual patient, and not only to an anatomical finding on a scan, she will be more conscious of her own responsibility, hence her reading will be more thorough and her diagnosis more accurate. And in fact, this is what he found. The radiologists reported that they had more empathy with the patient, and that they “felt like doctors”. They also spotted more irregularities and pathological findings when they had both the CT and the photo in front of them than when they were looking at the CT alone (Turner and Hadas-Halpern 2008).

So how about showing researchers in basic and preclinical biomedicine photos of patients with the disease they are currently investigating in a model of that disease?

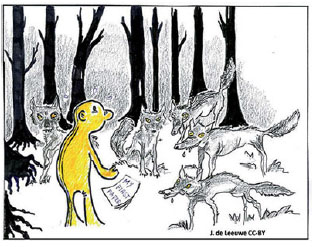

Predators in the (paper) forest

It struck at the end of July. A ‘scandal’ in science shook the Republic. Research by the NDR (Norddeutscher Rundfunk), the WDR (Westdeutscher Rundfunk) and the Süddeutsche Zeitung revealed that German scientists were involved in a “worldwide scandal”. More than 5,000 scientists at German universities, institutes and federal authorities had used public funds to publish their work with online pseudoscientific publishing houses that do not comply with the basic rules for assuring scientific quality. The public, and quite a few scientists, heard for the first time about “predatory publishing houses” and “predatory journals”.

Predatory publishing houses, which present themselves quite professionally in phishing mails, offer scientists Open Access (OA) publication of their studies for a fee and imply that the papers will be peer reviewed. In fact, no peer review is carried out, and the articles appear on the web sites of these “publishing houses”, which are not indexed in the usual search engines such as PubMed. Every scientist in Germany finds several such invitations per day in his or her inbox. If you are a scientist and receive none, you should be worried.

No scientific progress without non-reproducibility?

Slides of my talk at the FENS satellite event ‘How reproducible are your data?’ at Freie Universität Berlin, 6 July 2018

- Let’s get this out of the way: Reproducibility is a cornerstone of science: Bacon, Boyle, Popper, Rheinberger

- A ‘lexicon’ of reproducibility: Goodman et al.

- What do we mean by ‘reproducible’? Open Science collaboration, Psychology replication

- Reproducible – non reproducible – A false dichotomy: Sizeless science, almost as bad as ‘significant vs non-significant’

- The emptiness of failed replication? How informative is non-replication?

- Hidden moderators – Contextual sensitivity – Tacit knowledge

- “Standardization fallacy”: Low external validity, poor reproducibility

- The stigma of non-replication (‘incompetence’); the stigma of the replicator (‘boring science’).

- How likely is strict replication?

- Non-reproducibility must occur at the scientific frontier: Low base rate (prior probability), low hanging fruit already picked: Many false positives – non-reproducibility

- Confirmation – weeding out the false positives of exploration

- Reward the replicators and the replicated – fund replications. Do not stigmatize non-replication, or the replicators.

- Resolving the tension: The Siamese Twins of discovery & replication

- Conclusion: No scientific progress without non-reproducibility: Essential non-reproducibility vs. detrimental non-reproducibility

- Further reading

Open Science Collaboration, Psychology Replication. Science. 2015;349(6251):aac4716.

Goodman et al. Sci Transl Med. 2016;8:341ps12.

https://dirnagl.com/2018/05/16/can-non-replication-be-a-sin/

https://dirnagl.com/2017/04/13/how-original-are-your-scientific-hypotheses-really
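The base-rate point in the outline above (a low prior probability at the scientific frontier produces many false positives) can be made concrete with the standard formula for the positive predictive value of a "significant" result. This is a minimal sketch, not taken from the slides: the function name and the default values for power (0.8) and significance level (0.05) are assumptions chosen for illustration, and bias and multiple testing are ignored.

```python
def positive_predictive_value(prior, power=0.8, alpha=0.05):
    """Probability that a statistically significant finding is true.

    prior: share of tested hypotheses that are actually true (base rate)
    power: probability of detecting a true effect
    alpha: probability of a false positive when there is no effect
    """
    true_positives = power * prior
    false_positives = alpha * (1.0 - prior)
    return true_positives / (true_positives + false_positives)


if __name__ == "__main__":
    # From well-trodden ground (high prior) to the frontier (low prior).
    for prior in (0.5, 0.1, 0.01):
        ppv = positive_predictive_value(prior)
        print(f"prior {prior:4.2f}: PPV {ppv:.2f}")
```

With these assumed values, a prior of 0.1 already means that roughly one in three significant findings is a false positive, and lower power makes it worse. On this reading, a good deal of non-reproducibility is what one would expect from bold exploration rather than a sign of sloppy work.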

Train PIs and Professors!

There is a lot of thinking going on today about how research can be made more efficient, more robust, and more reproducible. At the top of the list are measures for improving internal validity (for example randomizing and blinding, prespecified inclusion and exclusion criteria etc.), measures for increasing sample sizes and thus statistical power, putting an end to the fetishization of the p-value, and open access to original data (open science). Funders and journals are raising the bar for applicants and authors by demanding measures to safeguard the validity of the research submitted to them.

Students and young researchers have taken note, too. I teach, among other things, statistics, good scientific practice and experimental design, and I am impressed every time by the enthusiasm of the students and young postdocs, and by how they leap into the adventure of their scientific projects with an unbending will to “do it right”. They soak up suggestions for improving the reproducibility and robustness of their research like a dry sponge soaks up water. Often, however, the discussion ends on an unsatisfying note, especially when we discuss the students’ own experiments and approaches to research. I often hear: “That’s all well and good, but my group leader won’t go for it.” Group leaders tell them: “That is the way we have always done it, and it got us published in Nature and Science”, “If we do it the way you suggest, it won’t get through the review process”, or “Then we could only get it published in PLOS One (or PeerJ, F1000Research etc.), and the paper will contaminate your CV”, etc.

I often wish that not only the students were sitting in the seminar room, but their supervisors too!

Can (Non)-Replication be a Sin?

I failed to reproduce the results of my experiments! Some of us are haunted by this horror vision. The scientific academies, the journals and by now the funders themselves are all calling for reproducibility, replicability and robustness of research. A movement for “reproducible science” has developed. Funding programs for the replication of research papers are now in the works. In some branches of science, especially in psychology, but also in fields like cancer research, results are now being systematically replicated… or not. Thus we are now in the throes of a “reproducibility crisis”.

Now Daniele Fanelli, a scientist who up to now could be expected to side with those who support the reproducible science movement, has raised a warning voice. In the prestigious Proceedings of the National Academy of Sciences he asked rhetorically: “Is science really facing a reproducibility crisis, and if so, do we need it?” So today, on the eve, perhaps, of a budding opposition movement, I want to have a look at some of the objections to the “reproducible science” mantra. Is reproducibility of results really the foundation of the scientific method?

When you come to a fork in the road: Take it

It is for good reason that researchers are the object of envy. When not stuck with bothersome tasks such as grant applications, reviews, or preparing lectures, they actually get paid for pursuing their wildest ideas! To boldly go where no human has gone before! We poke about in the scientific literature and carry out pilot experiments that, surprisingly, almost always succeed. Then we do a series of carefully planned and costly experiments. Sometimes they turn out well, often not, but they do lead us into the unknown. This is how ideas become hypotheses; one hypothesis leads to the next, and voilà, lo and behold, we confirm them! In the end, sometimes only after several years and considerable wear and tear on personnel and material, we manage to weave a “story” out of them (see also). Through a complex chain of results the story closes with a “happy end”, perhaps in the form of a new biological mechanism, or at least a little piece that fits the puzzle, and it is always presented to the world by means of a publication. Sometimes even in one of the top journals.

Of Mice, Macaques and Men

Tuberculosis kills far more than a million people worldwide every year. The situation is particularly problematic in southern Africa, eastern Europe and Central Asia. There is no truly effective vaccine against tuberculosis (TB). In countries with a high incidence, people are vaccinated with the live attenuated vaccine strain Bacillus Calmette-Guérin (BCG), but BCG gives very little protection against tuberculosis of the lungs, and in any case its protective effect is highly variable and unpredictable. For years, a worldwide search has been under way for a better TB vaccine.

Recently, the British Medical Journal published an investigation that raises serious charges against researchers and their universities: conflicts of interest, animal experiments of questionable quality, selective use of data, deception of funders and ethics committees, all the way up to endangerment of study participants. There was also a whistleblower… who had to pack his bags. It all happened in Oxford, at one of the most prestigious virological institutes on earth, and the study in humans was carried out on infants from the poorest strata of the population. Let’s have a closer look at this explosive mix, for we have much to learn from it about

- the ethical dimension of preclinical research and the dire consequences that low quality in animal experiments and selective reporting can have;

- the important role of systematic reviews of preclinical research, and finally also about

- the selective (or non) availability and scrutiny of preclinical evidence when commissions and authorities decide on clinical studies.

Is Translational Stroke Research Broken, and if So, How Can We Fix It?

Based on research, mainly in rodents, tremendous progress has been made in our basic understanding of the pathophysiology of stroke. After many failures, however, few scientists today deny that bench-to-bedside translation in stroke has a disappointing track record. I here summarize many measures to improve the predictiveness of preclinical stroke research, some of which are currently in various stages of implementation: We must reduce preventable (detrimental) attrition. Key measures for this revolve around improving preclinical study design. Internal validity must be improved by reducing bias; external validity will improve by including aged, comorbid rodents of both sexes in our modeling. False positives and inflated effect sizes can be reduced by increasing statistical power, which necessitates increasing group sizes. Compliance with reporting guidelines and checklists needs to be enforced by journals and funders. Customizing study designs to exploratory and confirmatory studies will leverage the complementary strengths of both modes of investigation. All studies should publish their full data sets. On the other hand, we should embrace inevitable NULL results. This entails planning experiments in such a way that they produce high-quality evidence when NULL results are obtained and making these available to the community. A collaborative effort is needed to implement some of these recommendations. Just as in clinical medicine, multicenter approaches help to obtain sufficient group sizes and robust results. Translational stroke research is not broken, but its engine needs an overhaul to render more predictive results.
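To give a feeling for what "increasing statistical power necessitates increasing group sizes" means in practice, here is a rough sketch of a sample size calculation for comparing two groups. It uses the common normal approximation for a two-sided test and a standardized effect size (Cohen's d); the function name and the default values for the significance level and power are assumptions for illustration, and an exact t-test calculation gives slightly larger numbers.

```python
from math import ceil
from statistics import NormalDist


def n_per_group(effect_size_d, alpha=0.05, power=0.8):
    """Approximate animals per group for a two-group comparison.

    Normal approximation: n = 2 * ((z_(1-alpha/2) + z_power) / d) ** 2
    """
    standard_normal = NormalDist()
    z_alpha = standard_normal.inv_cdf(1 - alpha / 2)  # two-sided threshold
    z_power = standard_normal.inv_cdf(power)
    return ceil(2 * ((z_alpha + z_power) / effect_size_d) ** 2)


if __name__ == "__main__":
    # Large, medium and small standardized effects.
    for d in (1.0, 0.5, 0.2):
        print(f"d = {d}: about {n_per_group(d)} per group")
```

Under these assumptions, halving the expected effect size roughly quadruples the required group size. Group sizes of around ten animals therefore only have adequate power for very large effects, which is one reason why multicenter approaches are attractive.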

Read the full article at the publisher’s site (Stroke/AHA). If your library does not have a subscription, here is the author’s manuscript (Stroke/AHA did not even allow me to pay for open access, as it is ‘a special article…’).

The Relative Citation Ratio: It won’t do your laundry, but can it exorcise the journal impact factor?

Recently, NIH scientists B. Ian Hutchins and colleagues (pre)published “The Relative Citation Ratio (RCR). A new metric that uses citation rates to measure influence at the article level”. [Note added 9.9.2016: A peer reviewed version of the article has now appeared in PLOS Biol]. Like Stefano Bertuzzi, the Executive Director of the American Society for Cell Biology, I am enthusiastic about the RCR. The RCR appears to be a viable alternative to the widely (ab)used Journal Impact Factor (JIF).

The RCR has recently been discussed in several blogs and editorials (e.g. NIH metric that assesses article impact stirs debate; NIH’s new citation metric: A step forward in quantifying scientific impact?). At a recent workshop organized by the National Library of Medicine (NLM) I learned that the NIH is planning to use the RCR widely in its own grant assessments as an antidote to the JIF, raw article citations, h-factors, and other highly problematic or outright flawed metrics.