Category: Publishing

Judging science by proxies: A short and incomplete history

In this post I’ll be looking at the question of why scientific careers today depend so much on the Journal Impact Factor (JIF). And on the acquisition of as much third-party funding as possible. Or, more generally, why the content, originality and reliability of research results are often a secondary matter when commissions talk their heads off about whom to include in their own ranks. And whom not. Or which grant applications deserve to be funded. In short, follow me on a brief and incomplete history of how and why we ended up judging the quality of science through proxies such as the JIF and the amount of third-party funding. Perhaps a historical perspective will also yield clues as to how we can overcome this mess. But I am getting ahead of myself. Let’s start where it all began, with the founding fathers of modern science.

Peer review is dead, long live peer review!

We have all been there: After a long wait and increasing tension, an email arrives from the editorial office. With an increasing heart rate we open the email. “Thank you for submitting your manuscript to our Journal. Your manuscript was sent for external peer review, which is now complete. Based on our evaluation and the comments of external reviewers, your manuscript did not achieve a sufficient priority for further consideration, and we have decided not to pursue your manuscript for publication. While we understand you may be disappointed with this decision, we hope the reviewer comments will help you revise your manuscript and submit it to another journal. Thank you for the privilege of reviewing your work.” After the initial shock, we take a look at the reviews in the attachment. Reviewer 1 found the work quite good, with some minor issues, all fixable, and offered well-meaning suggestions. But Reviewer 2! Has he even read the article? Did he confuse it with another paper on his desk? In any case, this unknown ‘expert’ was totally incompetent, but still dared to pour several pages of slurry over three years of our hard work and its highly relevant results.

From mouse to man through the valley of death?

‘Translation’ – from mouse to human and back – the mantra and eternal quest of university medicine! Where else than in academic medical centers can you find all this under one roof: basic biomedical and clinical research, the patients necessary for clinical trials, government funding, as well as motivated and excellently trained personnel! ‘Translation’ is as old as academic medicine itself – but the term for it was only coined in the 1980s, and since then it has adorned the websites and mission statements of all university hospitals worldwide. Translation has certainly been a model of success – just think of the treatment of chronic neurological disorders like epilepsy, Parkinson’s disease or multiple sclerosis, of therapies for some forms of cancer, or of HIV.

“The Ioannidis Affair”

On March 17th, just as many countries were taking draconian measures to contain the SARS-CoV-2 pandemic, the Greek-American meta-researcher and epidemiologist John Ioannidis, whom I often quote in my posts, proclaimed a “fiasco in the making”! With strong language and a few ad hoc estimates of COVID fatality rates he warned that, based on poor data or no evidence at all, politicians might inflict incalculable damage on society, possibly much worse than what a virus putatively as dangerous as influenza could cause. As he is one of the most highly cited researchers in the world and a vocal critic of quality problems in biomedicine, his COVID-related interviews, opinion pieces and articles have since received a great deal of attention in the scientific community, in the lay press, and especially among his worldwide fan base.

Diet: Is nutrition science a more reliable source of advice than your grandmother?

Meat consumption is bad for your health. It gives you cancer, heart attacks, stroke, you name it. Says nutrition science. And they must know. After all, it’s a science. Is it, really?

A few years ago, Jonathan Schoenfeld and John Ioannidis took a standard cookbook and randomly selected 50 frequently occurring ingredients (sugar, coffee, salt, etc.). They then carried out a systematic literature search, asking whether there were epidemiological studies that had investigated the cancer risk of these ingredients. And they found what they were looking for. For 80% of the ingredients at least one study existed, for many even several. Of 264 of these studies, 103 found that the ingredient investigated increased the risk of cancer, while 88 found that it reduced the risk! So after all Joe Jackson was right: ‘Everything gives you cancer’! But wait a minute: Milk? Veal? Orange juice?

When carmakers hack brains

You’ve got to see this YouTube video! Hectically cut sequences of busy young scientists in lab coats in high-tech laboratories, nerdy-looking guys soldering electronic circuits and staring into oscilloscopes, and we are taken on a roller coaster ride through an animated brain chock-full of tangled nerve cells. And in between all this, on stage at the California Academy of Sciences, car and rocket manufacturer Elon Musk announces his latest vision in a messianic pose: The symbiosis of the human brain with artificial intelligence (AI)! This time his plan to save mankind does not involve mass evacuation to Mars, but will be realized by a revolutionary Brain Machine Interface (BMI), designed and manufactured by his company Neuralink. You may have guessed it, this has caused a tremendous media hype all over the world. The verdict in the press and on the net was: “Musk at his best, a bit over the edge, but if HE announces a breakthrough like that there must be something to it”. The more cautious asked: “But couldn’t this be dangerous for mankind? Do we need a new ethic for stuff like this?”

Could gambling save science?

U.S. economist Robin Hanson posed this question in the title of an article published in 1995. In it he suggested replacing the classic review process with a market-based alternative. Instead of peer review, bets could decide which projects are supported or which scientific questions are prioritized. In these so-called “prediction markets”, individuals stake “bets” on a particular result or outcome. The more people trade on the marketplace, the more precise the prediction of the outcome becomes, based as it is on the aggregate information of the participants. The prediction market thus taps the intelligence of the swarm. We know this from sports betting and election forecasts. But in science? Sounds totally crazy, but it isn’t. It is just now making its entry into various branches of science. How does it work, and what does it have going for it?

Your Lab is Closer to the Bedside Than You Think

With a half-page article written about him and his study, a previously unknown Israeli radiologist made it into the New York Times (NYT 2009). Dr. Yehonatan Turner presented computed tomography scans (CTs) to radiologists and asked them to make a diagnosis. The catch: Along with the CT, a current portrait photograph of the patient was presented to the physicians. Remember, radiologists very often do not see their patients; they make their diagnosis in a dark room staring at a screen. In his study, Dr. Turner used a smart cross-over design: He first showed the CT together with a portrait photograph of the patient to one group of radiologists. Three months later the same group had to make a diagnosis using the same CT, but without the photo. Another group of radiologists was first given only the CT and then, three months later, the CT with the photo. A further control group examined only the CTs, as in routine practice. The hypothesis: When a radiologist is exposed to the individual patient, and not only to an anatomical finding on a scan, she will be more conscious of her own responsibility, hence findings will be more thorough and the diagnosis more accurate. And in fact, this is what he found. The radiologists reported that they had more empathy with the patient, and that they “felt like doctors”. And they spotted more irregularities and pathological findings when they had the CT and photo in front of them than when they were only looking at the CT (Turner and Hadas-Halpern 2008).

So how about showing researchers in basic and preclinical biomedicine photos of patients with the disease they are currently investigating in a model of the disease?

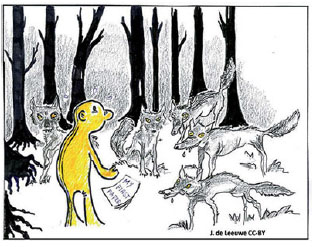

Predators in the (paper) forest

It struck at the end of July. A ‘scandal’ in science shook the Republic. Research by NDR (Norddeutscher Rundfunk), WDR (Westdeutscher Rundfunk) and the Süddeutsche Zeitung revealed that German scientists are involved in a “worldwide scandal”. More than 5,000 scientists at German universities, institutes and federal authorities had, with public funds, published their work with online pseudoscientific publishing houses that do not comply with the basic rules for assuring scientific quality. The public, and quite a few scientists as well, heard for the first time about “predatory publishing houses” and “predatory journals”.

Predatory publishing houses, whose presentation in phishing mails is quite professional, offer scientists Open Access (OA) publication of their scientific studies for a fee, implying that the papers will be peer reviewed. In fact no peer review is carried out, and the articles are published on the websites of these “publishing houses”, which are, however, not listed in the usual search engines such as PubMed. Every scientist in Germany finds several such invitations per day in his or her inbox. If you are a scientist and receive none, you should be worried.

No scientific progress without non-reproducibility?

Slides of my talk at the FENS satellite event ‘How reproducible are your data?’ at Freie Universität, Berlin, 6 July 2018

- Let’s get this out of the way: Reproducibility is a cornerstone of science: Bacon, Boyle, Popper, Rheinberger

- A ‘lexicon’ of reproducibility: Goodman et al.

- What do we mean by ‘reproducible’? Open Science Collaboration, Psychology replication

- Reproducible – non reproducible – A false dichotomy: Sizeless science, almost as bad as ‘significant vs non-significant’

- The emptiness of failed replication? How informative is non-replication?

- Hidden moderators – Contextual sensitivity – Tacit knowledge

- “Standardization fallacy”: Low external validity, poor reproducibility

- The stigma of nonreplication (‘incompetence’) – The stigma of the replicator (‘boring science’).

- How likely is strict replication?

- Non-reproducibility must occur at the scientific frontier: Low base rate (prior probability), low hanging fruit already picked: Many false positives – non-reproducibility

- Confirmation – weeding out the false positives of exploration

- Reward the replicators and the replicated – fund replications. Do not stigmatize non-replication, or the replicators.

- Resolving the tension: The Siamese Twins of discovery & replication

- Conclusion: No scientific progress without non-reproducibility: Essential non-reproducibility vs. detrimental non-reproducibility

- Further reading

Open Science Collaboration, Psychology Replication. Science. 2015;349(6251):aac4716.

Goodman et al. Sci Transl Med. 2016;8:341ps12.

https://dirnagl.com/2018/05/16/can-non-replication-be-a-sin/

https://dirnagl.com/2017/04/13/how-original-are-your-scientific-hypotheses-really
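
The base-rate point in the talk outline above (a low prior probability at the scientific frontier leads to many false positives, and hence to non-reproducibility) can be illustrated with a small calculation. This is only a sketch and not taken from the slides: the function name `positive_predictive_value` and the default values for statistical power (0.8) and significance threshold (0.05) are assumptions chosen for illustration. It computes the share of ‘significant’ findings that reflect a true effect, given how likely the tested hypotheses were to be true in the first place.

```python
def positive_predictive_value(prior, power=0.8, alpha=0.05):
    """Share of 'significant' findings that are true effects.

    prior: assumed fraction of tested hypotheses that are actually true
    power: assumed probability of detecting a true effect
    alpha: assumed probability of a false positive when there is no effect
    """
    true_positives = power * prior
    false_positives = alpha * (1.0 - prior)
    return true_positives / (true_positives + false_positives)


if __name__ == "__main__":
    # Hypothetical base rates, from well-trodden ground to the frontier
    for prior in (0.5, 0.1, 0.01):
        ppv = positive_predictive_value(prior)
        print(f"prior = {prior:.2f}  ->  PPV = {ppv:.2f}")
```

Under these assumed values, the lower the base rate, the smaller the share of positive findings that can be expected to hold up in a replication, even when every individual study is done competently.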